Since there is no complete out-of-the-box functionality for rebuilding the Sitecore Xdb collection based on all historical data within your SQL shards, we received a hotfix from Sitecore that backports this functionality from 9.0.2 to 9.0.1. If you need this hotfix, please reach out to Sitecore and reference “SC Hotfix 232561-2”. Their reply:

Good news. We’ve made a backport of the fix from 9.0.2 to 9.0.1.

You can find it here:

https://dl.sitecore.net/hotfix/SC%20Hotfix%20232561-2.zip

Be aware that the hotfix was built specifically for 9.0.1, and you should not install it on other Sitecore versions or in combination with other hotfixes unless explicitly instructed by Sitecore Support.

The fix should be applied to the application serving your “xConnect Collection Search Service” and the related webjob/service “xConnect Search Indexer” also known as the IndexWorker.

After applying the hotfix and triggering a rebuild using Kudu, we noticed the following log entry:

2019-03-20 11:00:10.801 +00:00 [Error] An error occured.

System.Exception: Failed to repeat processing: key: 5a5e965b-2d7c-0000-0000-0583c1f579f6 msg: Field ‘facets_keybehaviorcache_pageevents.data_s’ contains a term that is too large to process. The max length for UTF-8 encoded terms is 32766 bytes. The most likely cause of this error is that filtering, sorting, and/or faceting are enabled on this field, which causes the entire field value to be indexed as a single term. Please avoid the use of these options for large fields.

at Sitecore.Xdb.Collection.Search.Azure.Processing.DataFeedsProcessing.d__13.MoveNext()

(* to increase your log level, edit the following file: “D:\home\site\wwwroot\App_data\jobs\continuous\IndexWorker\App_data\Config\Sitecore\CoreServices\sc.Serilog.xml” and set your MinimumLevel DefaultValue to ‘Information’)

(* to check your xcSearch IndexWorker logs within Kudu, go to the following directory:

“D:\local\Temp\jobs\continuous\IndexWorker\saaglmx2.h32\App_data\Logs”)
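The run directory under “IndexWorker” has a randomly generated name (“saaglmx2.h32” in the path above), so it will differ per deployment. As a minimal sketch, assuming the layout shown above (a run directory containing “App_data\Logs”), the most recently modified log folder could be located like this. The function name `latest_log_dir` is made up for illustration and is not part of Sitecore or Kudu.

```python
from pathlib import Path
from typing import Optional


def latest_log_dir(jobs_root: str) -> Optional[Path]:
    """Return the App_data/Logs folder of the most recently modified run directory.

    jobs_root is the folder that holds the randomly named run directories.
    Returns None when the root or the log folders do not exist.
    """
    root = Path(jobs_root)
    if not root.is_dir():
        return None
    # Assumed layout (taken from the path in this post): <run dir>/App_data/Logs
    candidates = [d / "App_data" / "Logs" for d in root.iterdir() if d.is_dir()]
    candidates = [c for c in candidates if c.is_dir()]
    if not candidates:
        return None
    # Pick the log folder that was written to most recently
    return max(candidates, key=lambda p: p.stat().st_mtime)


if __name__ == "__main__":
    print(latest_log_dir(r"D:\local\Temp\jobs\continuous\IndexWorker"))
```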

The cause of this exception is that some strings stored within the SQL shards are too large for Azure Search to handle: a single indexed term may not exceed 32766 bytes. The solution can be found in hotfix “SC Hotfix 304701-1”, which ensures that large strings are truncated before the IndexWorker aggregates the data into your Azure Search Xdb collection.
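To illustrate the limit itself: the 32766 bytes in the error message are counted on the UTF-8 encoding, not on the number of characters. The following is only a sketch of that idea and not the hotfix's actual implementation, which I have not seen; the function names are made up for illustration.

```python
MAX_TERM_BYTES = 32766  # limit quoted in the Azure Search error message


def utf8_len(value: str) -> int:
    """Size of the string in bytes once encoded as UTF-8."""
    return len(value.encode("utf-8"))


def truncate_utf8(value: str, max_bytes: int = MAX_TERM_BYTES) -> str:
    """Cut a string so its UTF-8 encoding fits within max_bytes."""
    encoded = value.encode("utf-8")
    if len(encoded) <= max_bytes:
        return value
    # Slicing bytes may split a multi-byte character; errors="ignore"
    # drops the incomplete trailing sequence instead of raising.
    return encoded[:max_bytes].decode("utf-8", errors="ignore")


if __name__ == "__main__":
    oversized = "x" * 40000
    print(utf8_len(oversized), utf8_len(truncate_utf8(oversized)))
```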

So after applying the second hotfix we were finally able to rebuild the Xdb collection (including the Xdb-secondary mechanism). Whenever you apply the hotfix, please make sure that you stop and start both the webjob and the App Service. Be aware that the webjob can keep running while the App Service is stopped, so restart them both!

Once both have been restarted, you can remove the old Xdb collection within Azure Search. It is now safe to trigger the rebuild:

“D:\home\site\wwwroot\App_data\jobs\continuous\IndexWorker>.\XConnectSearchIndexer.exe -rr”

[ In some cases it might help to trigger the rebuild from the following location:

“D:\local\Temp\jobs\continuous\IndexWorker\RANDOMID\” ]

The rebuild will create the new Xdb and Xdb-secondary collections. Over time, the document count within your collection will increase as the data available in your shards is indexed.
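If you would rather poll the document count than watch it in the Azure portal, the Azure Search REST API has a Count Documents operation. Below is a minimal sketch of building that request. The service name, index name and API key are placeholders you have to supply yourself, and the API version is an assumption that may need adjusting for your service.

```python
from urllib.parse import quote
from urllib.request import Request, urlopen

API_VERSION = "2019-05-06"  # assumption: adjust to a version your service supports


def count_request(service: str, index: str, api_key: str,
                  api_version: str = API_VERSION) -> Request:
    """Build the Count Documents request for one Azure Search index."""
    url = (f"https://{service}.search.windows.net/indexes/{quote(index)}"
           f"/docs/$count?api-version={api_version}")
    return Request(url, headers={"api-key": api_key})


def document_count(service: str, index: str, api_key: str) -> int:
    """Send the request and parse the plain-text count in the response."""
    with urlopen(count_request(service, index, api_key)) as resp:
        # utf-8-sig tolerates a byte order mark in front of the number
        return int(resp.read().decode("utf-8-sig").strip())


# Example (placeholders, not real values):
# document_count("my-search-service", "xdb", "<admin or query key>")
```

Calling `document_count` every few minutes during the rebuild should show the number going up.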

*The tips below come from Martin Ray English - thanks for this!

[ https://sitecoreart.martinrayenglish.com/2019/09/sitecore-xdb-optimizing-your-xdb-index.html ]

Bear in mind that reducing the size of your indexer batches might improve your results and rebuild time as well. I had great results after setting the batch size to ‘500’:

“App_Data\jobs\continuous\IndexWorker\App_data\config\sitecore\SearchIndexer\sc.Xdb.Collection.IndexerSettings.xml”

I set the SplitRecordsThreshold to 12000 (instead of the default 25000):

“App_Data\jobs\continuous\IndexWorker\App_data\config\sitecore\SearchIndexer\sc.Xdb.Collection.IndexerSettings.xml”

And the ParallelizationDegree to 1 (instead of 4):

“App_Data\jobs\continuous\IndexWorker\App_data\config\sitecore\SearchIndexer\sc.Xdb.Collection.IndexWriter.AzureSearch.xml”
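If you need to apply these values on several environments, editing the XML by hand gets tedious. Here is a minimal sketch of a helper that changes one setting in such a config file. It assumes each setting is a plain XML element whose text is the value, which you should verify against your own files first. The helper name `set_setting` is made up for illustration; take a backup before running anything like this.

```python
import xml.etree.ElementTree as ET


def set_setting(xml_path: str, element_name: str, new_value: str) -> str:
    """Set the text of the first element called element_name; return the old value."""
    # insert_comments=True (Python 3.8+) keeps XML comments inside the file
    parser = ET.XMLParser(target=ET.TreeBuilder(insert_comments=True))
    tree = ET.parse(xml_path, parser)
    node = tree.getroot().find(f".//{element_name}")
    if node is None:
        raise KeyError(f"{element_name} not found in {xml_path}")
    old_value = node.text or ""
    node.text = new_value
    # Overwrites the file in place
    tree.write(xml_path, encoding="utf-8", xml_declaration=True)
    return old_value
```

For example, `set_setting(path_to_index_writer_config, "ParallelizationDegree", "1")` would return the previous value and write the new one, where `path_to_index_writer_config` points at your own copy of the file. Restart the webjob afterwards so the IndexWorker picks up the change.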

Hope this post will help you when you are stuck with the current rebuilding mechanism in Sitecore 9.0.1.

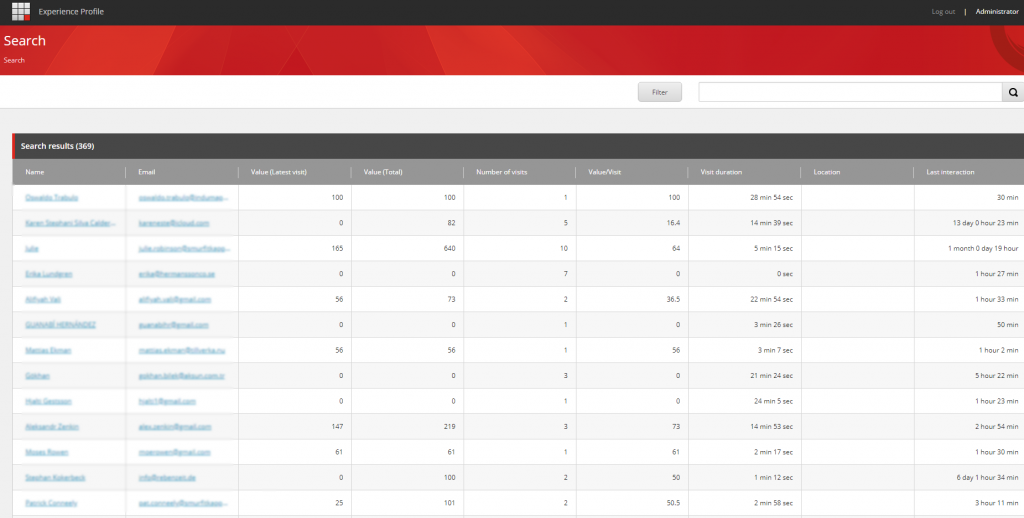

Finally - identified user data visible within the Experience Profile (Y)!